What would happen if you lost all your data?

Imagine waking up to find that all your research data has been lost. It is possibly a researcher’s worst nightmare, and one that some unfortunate researchers have actually lived through. While complete data loss might sound shocking, even more shocking is the way some researchers have admitted to storing their data. Timothy Vines, an evolutionary ecologist at the University of British Columbia in Vancouver, reported in his paper "The Availability of Research Data Declines Rapidly with Article Age" that researchers have admitted to storing their old data in places such as their parents’ attic, in boxes in the garage, or on now-defunct floppy disks. Such practices can have consequences as grave as complete data loss.

Managing research data effectively is a persistent problem for researchers at all stages of their careers. The importance of effective data storage is evident from these statistics reported in an article in Nature:

Data output is growing rapidly

- 90% of all the data in the world has been generated over the last 2 years.

- Scientific data output is currently increasing at an annual rate of 30%.

Despite significant investment, data is not being managed effectively

- The current estimated total global expenditure on research and development is $1.5 trillion, an investment that could be at risk if the resulting data is not managed well.

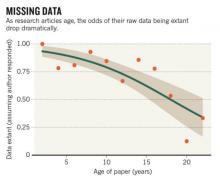

- Much of the data generated is lost: the odds of being able to source a dataset decline by 17% each year.

- 80% of datasets over 20 years old are not available.

Research data is lost chiefly because researchers are often the only source of their data. Hence, they should store their data securely by using data management tools. Many such tools are widely available, including electronic notebooks, cloud storage services like Google Drive, and code hosting sites like GitHub. As Nathan Westgarth, who works at Digital Science, points out in a post, collaborative research across geographical boundaries is becoming more common, and this is making research data harder to manage. The varying levels of technical experience among collaborators, their knowledge of the different tools available, and the constraints of the lab systems and processes with which researchers need to comply are just some of the factors that complicate data management. As a result, many studies are undermined because the data on which they are based is no longer available.

Apart from researchers, journals too can play a vital role in securing data. Many journals have now made it mandatory for authors to provide the data underlying their studies at the time of manuscript submission, thus ensuring that data is accessible and protected. Data sharing is regarded by many as a step in the right direction toward open science, as it will protect data and help scientific progress. Research data is priceless, so researchers and journals should work together to ensure that data is not lost to science forever.

Do you use tools to manage your data? Will sharing data help avoid data loss? Please share your views in the comments section below.