A broader view of research impact through Altmetric

The rapidly evolving academic research and publishing community is seeing many digital developments, one of which is being compared to “the Wild West.” The altmetrics movement proposes an alternative to the popular journal impact factor and personal citation indices such as the h-index. From this new frontier, Euan Adie explains some things that you as a researcher ought to consider about research impact.

Euan Adie is founder and CEO of London-based start-up Altmetric, which tracks online activity relating to scholarly literature. Altmetric was founded in 2011, emerging from the altmetrics movement as a side project but becoming a full-fledged start-up after it received Elsevier’s “Apps for Science” Grand Prize award. It is now supported by Digital Science, part of Macmillan Science & Education, which is dedicated to serving researchers by providing software and technology tailored for the scientific community’s needs.

Previously, Euan was a product manager for data management app Projects at Digital Science and a senior product manager at Nature Publishing Group (NPG) where he worked on projects such as Connotea, Nature.com Blogs, and the Nature mobile apps. Before joining the scientific publishing industry, Euan worked in academia as a bioinformatics researcher studying psychiatric genetics, which followed a degree in computer science and a co-founder role in a start-up supported by Edinburgh University’s technology transfer office.

Can you please give our readers an overview of Altmetric and what prompted your interest in such a start-up?

Sure! Altmetric is a set of tools and approaches that you can use to take a broader view of impact. Traditionally, measuring ‘impact’ meant using citations or the Impact Factor to help gauge the scholarly influence of an article. But nowadays we also care about other types of outputs – datasets and software, say, not just articles – and other types of impact, be it wider societal impact, economic, or on policy or practice.

In practice this means tools that can pull together indicators of impact and discussion from across the web for a paper. The data collected could be social media, newspaper or magazine stories, data from online reference managers, or things like public policy documents.

I started Altmetric.com (which is one group working in the general field of altmetrics) because it’s an area I’ve been interested in for a long time. I used to work in bioinformatics, where there’s a mix of research and developing software for your lab and others. If you write a piece of software as a researcher, though, there’s no widely recognized way of getting credit for it. You might need to write an application note or similar, which is pretty much a screenshot of the software, a brief description, and a URL for where to get it. Hopefully, people will then cite that note in their own work.

That’s the first thing that didn’t feel right. The second thing is that citations provide only one measure of impact: use by other academics. That always seemed like we were doing a terrible disservice to research that had more of a public engagement or practice component. People who are busy putting research into practice – saving lives, building bridges – are also an important audience, but they aren’t writing papers and citing the evidence they use in their work.

What sets your organization apart from some of the other altmetrics providers?

We’re more nerdy, probably, by which I mean we are primarily a data science company, and many of us came from academic or scientific publishing backgrounds.

We have two things we focus on: (1) making sure the data is as good as it can be, which includes making it auditable and trustworthy, and (2) really paying attention to user experience to make the data as useful and approachable as possible. There’s a tricky balance there between simplicity and nuance. You don’t want to overwhelm people with a flood of data, but over-simplifying can lead people down the wrong path.

How does Altmetric actually locate scholarly activity? Can material without a DOI be tracked?

Yes. We need some sort of stable identifier for the thing that we’re tracking, but it doesn’t have to be a DOI: it could be a PubMed ID, or an arXiv ID, or an identifier from RePEc or SSRN in economics or the social sciences, respectively. We also work with a lot of institutional repositories, which issue handles registered with the Handle System.

The stable identifier requirement stems from the disambiguation we need to do – scholarly outputs are typically found spread across the web on many different sites and in different forms. You can imagine a biomedical paper might be found on PubMed as an abstract; PubMed Central as full text; the publisher site as an abstract page, PDF, and full text HTML; and maybe on the author’s institutional repository too.

But in most cases you want the details of the activity around any of those versions collected in the same place, so we need an identifier to hang everything off of.
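The idea of hanging every version's activity off one identifier can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Altmetric's actual implementation: the alias table, the `output-1`/`output-2` keys, the placeholder identifiers, and the function names `normalize` and `group_mentions` are all hypothetical.

```python
from collections import defaultdict

# Hypothetical alias table: every known identifier for a scholarly output
# maps to one canonical key. All identifiers below are made-up placeholders;
# a real system would build this table from metadata lookups.
ALIASES = {
    "doi:10.1234/example.5678": "output-1",
    "pmid:00000001": "output-1",
    "pmcid:PMC0000001": "output-1",
    "handle:12345/678": "output-1",
    "arxiv:0000.00001": "output-2",
}


def normalize(identifier: str) -> str:
    """Strip whitespace and lowercase the scheme prefix; keep the value as is."""
    scheme, _, value = identifier.strip().partition(":")
    return f"{scheme.lower()}:{value}"


def group_mentions(mentions):
    """Group (identifier, source) pairs by canonical output key.

    Mentions whose identifier is not in the alias table end up under None.
    """
    grouped = defaultdict(list)
    for identifier, source in mentions:
        key = ALIASES.get(normalize(identifier))
        grouped[key].append(source)
    return dict(grouped)


mentions = [
    ("DOI:10.1234/example.5678", "tweet"),
    ("pmid:00000001", "news story"),
    ("handle:12345/678", "policy document"),
    ("arxiv:0000.00001", "blog post"),
    ("doi:10.9999/unknown", "tweet"),
]
result = group_mentions(mentions)
```

Here the tweet, the news story, and the policy document all point at different versions of the same paper, yet they are collected under the single key `output-1`. The mention with an unrecognized identifier cannot be attached to any output, which is why a stable identifier is required in the first place.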

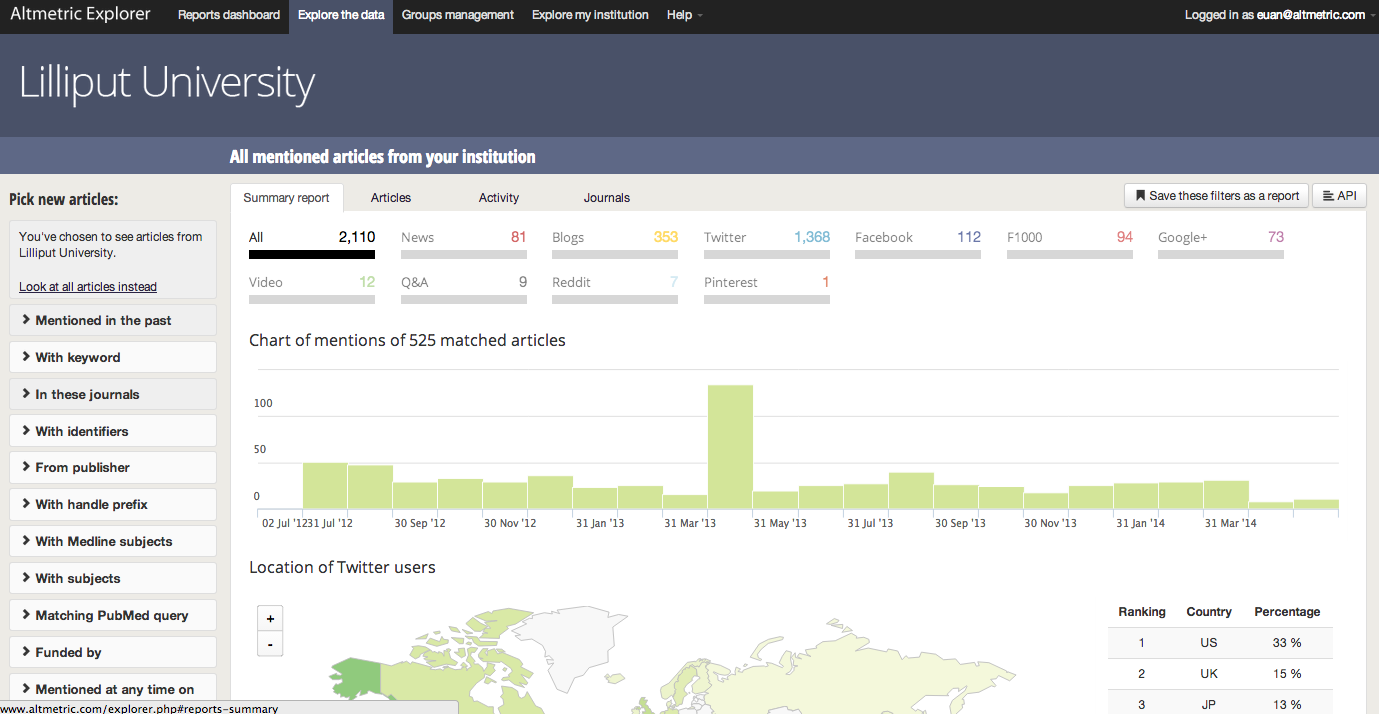

The Altmetric for Institutions application allows monitoring and reporting on the reach and impact of research beyond what traditional citation metrics capture.

What can you tell readers who feel that negative metrics are just as important?

That’s correct! It all depends on the context. Taking a broader view of impact also means taking a broader view of how the data is used. There are many cases – for readers or for administrative staff at universities – where the primary use case for altmetrics data isn’t assessment but discovery. Which papers about lung cancer have gotten the most media attention? How is the Department of Chemistry affecting environmental policy?

In these cases it’s important to see criticism in the online reaction (if there was any) as well as the good stuff.

Like other metrics, altmetrics provides part of a picture and not the full picture, but together they create a more balanced view. Can you explain this to our readers? Can you guide new users on points to consider when weighing an Altmetric to ensure a truer altmetric value?

We take a very conservative approach, which I think is the right one at this stage. There are many research groups looking at altmetrics data, into what it means and doesn’t mean, where there are accidental biases, and how different fields differ in online behavior when it comes to scholarly output. Until a deeper understanding of the metrics comes out of these kinds of projects, I think altmetrics are best used as an indicator in the broadest sense of the word: high altmetrics counts indicate that there’s something interesting about a particular article and that you should investigate further.

There are some additional things to bear in mind because altmetrics relies heavily on online attention. Specifically, older papers – for us, anything before mid-2011 – won’t show much, say, Twitter attention because we weren’t tracking them from the point at which they were published.

Just recently, in April 2014, Altmetric began tracking mentions of academic articles on Chinese microblogging site Sina Weibo, and the data is soon to be fully integrated into existing Altmetric tools. Could you please explain why Altmetric is doing this?

Yes, the Sina Weibo data is now integrated into everything we do, just like the data from Facebook, Twitter, and some of the other major social networking sites.

We’re very aware that what is true of a researcher or piece of research from the UK is not necessarily true of somebody in Brazil, or China, or any number of other places. We started out by tracking popular Western social media sources, but actually they aren’t always the ones researchers are using elsewhere. In fact, a couple of them are blocked in China completely.

Fixing that has been and continues to be a priority for us. Adding data from Sina Weibo was the first step – in terms of the data it represents, it’s huge, with some 500 million users and a hundred million messages a day.

Some skeptics have concerns that moving away from traditional peer-reviewed journal output will lead to an academic “Wild West” with altmetrics. Can you please respond to this?

I think it’s good to look critically at anything to do with review and evaluation, but I don’t believe altmetrics threatens traditional peer review. If anything, if you look at the altmetrics around, say, arXiv preprints, there’s a lot of scholarly attention made visible that could actually improve peer review, giving reviewers pointers to things they would otherwise miss.

I quite like the Wild West analogy, but with a more positive spin! There’s amazing potential here on the frontier of science online; researchers shouldn’t be afraid to explore and to stake out what they want in terms of how people view their work.

Thank you, Euan Adie.

This interview was conducted by Alagi Patel.