Can AI Handle Peer Review Responses? We Tested It: Here’s What We Found

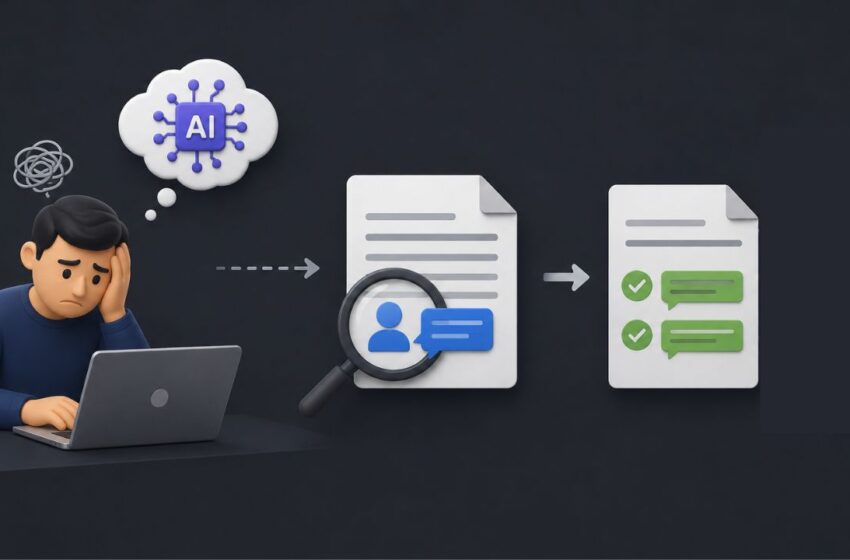

The Tempting Shortcut

You’ve received reviewer comments. Some are straightforward, others less so. You draft your responses, but you’re unsure: have you addressed everything clearly? Is the tone right? Are you missing something?

It’s increasingly common at this stage to turn to generative AI tools, pasting in the reviewer comment and your response, and asking the tool to refine or validate it. The output is often polished, structured, and reassuring. But how reliable is that reassurance? To explore this, we ran a structured test to evaluate how well AI tools assess the adequacy of responses to peer review comments.

Our AI Experiment

We evaluated four widely used AI tools, Perplexity, GPT, Claude, and Gemini, on their ability to assess whether author responses adequately address peer reviewer comments.

We created five representative cases, each consisting of an abstract, a peer reviewer comment, and an author response. Each case included at least three distinct issues identified by the peer reviewer, resulting in 15 evaluation points across the dataset. Across the four tools, this amounted to 60 observations (excluding repeated prompts used to assess consistency and drift).
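As a quick sanity check on these counts, the design can be laid out as a grid. This is only a sketch: it assumes exactly three issues per case (the text says "at least three"), and the case and issue numbers are placeholder labels rather than identifiers from the study.

```python
from itertools import product

# Tool names come from the article; case and issue labels are placeholders.
TOOLS = ["Perplexity", "GPT", "Claude", "Gemini"]
CASES = range(1, 6)    # five representative cases
ISSUES = range(1, 4)   # assumption: exactly three reviewer issues per case

# One evaluation point per (case, issue) pair.
evaluation_points = list(product(CASES, ISSUES))

# One observation per (tool, case, issue) triple, excluding repeated prompts.
observations = list(product(TOOLS, CASES, ISSUES))

print(len(evaluation_points))  # 15
print(len(observations))       # 60
```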

Each AI tool was prompted to evaluate the adequacy of the author’s response based on the abstract and reviewer comment. The full report includes screenshots of the AI outputs, along with the abstracts, reviewer comments, and responses tested.

What AI Does Well

Before examining the limitations, it’s worth noting areas where AI tools performed reasonably well. Across cases, AI tools were able to improve the clarity and readability of responses, summarize and restate author arguments in a structured way, identify some surface-level gaps (e.g., when a reviewer point was not addressed at all), and provide organized feedback formats that can help authors revise responses more efficiently.

These strengths make AI useful as a language and structuring aid, particularly in the early stages of drafting or refining responses. However, as the analysis below shows, adequacy in peer review responses requires more than clarity and structure.

What AI Does Not Do Well

1. Misinterpretation of Reviewer’s Intent

A recurring issue in the AI evaluations was a mismatch between what the reviewer was asking and what the AI validated. For instance, in a knowledge economy study, a reviewer questioned what novel contribution came from focusing on banks, given that many previous studies have already established banks as knowledge-intensive institutions. The author responded by explaining why banks are knowledge-intensive and giving descriptive examples of digital transformation in banking. The reviewer’s concern, however, was about the study’s incremental contribution and positioning, not whether banks belong to the knowledge economy, a point the reviewer had already accepted. AI tools nevertheless endorsed the response as appropriate.

Similarly, a reviewer raised a methodological concern that asset turnover could be inflated because of its denominator. The author switched to an alternative ratio (working capital turnover), and AI described the switch as a meaningful or “substantive” improvement. It did not engage with the fact that the reviewer had commented on the divisor (the denominator), whereas the author’s justification for the change focused on the numerator and therefore did not address the reviewer’s concern.
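To make the numerator/denominator distinction concrete, here is a minimal sketch using the common textbook definitions of the two ratios. The test case did not necessarily define them this way, and every figure below is hypothetical. Both ratios share a revenue-based numerator, so a justification about the numerator cannot settle a concern about what sits underneath it.

```python
def asset_turnover(revenue: float, total_assets: float) -> float:
    """Textbook form: revenue divided by total assets."""
    return revenue / total_assets


def working_capital_turnover(
    revenue: float, current_assets: float, current_liabilities: float
) -> float:
    """Textbook form: revenue divided by (current assets - current liabilities)."""
    return revenue / (current_assets - current_liabilities)


# Hypothetical figures, for illustration only.
revenue = 1_000.0

# Same numerator, smaller denominator: the ratio rises with no change in revenue.
large_base = asset_turnover(revenue, total_assets=2_000.0)
small_base = asset_turnover(revenue, total_assets=500.0)

# The alternative ratio keeps the numerator and only swaps the denominator.
wc_ratio = working_capital_turnover(
    revenue, current_assets=300.0, current_liabilities=200.0
)

print(large_base, small_base, wc_ratio)  # 0.5 2.0 10.0
```

In this toy example the alternative ratio is even more sensitive to a small denominator. An evaluator therefore needs to ask whether the new denominator resolves the reviewer's concern, not only whether the ratio was changed.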

2. Misjudging the Level of Clarification Provided by Authors

Another pattern was reflected in a case where a reviewer’s remark questioning the use of a “self-reported survey” was interpreted by the author as a need to define the term, leading to a descriptive explanation of what self-reports are. However, the reviewer’s phrasing functioned as a methodological prompt questioning why subjective self-reporting was appropriate for the construct, not what the term meant. AI tools nevertheless judged the clarification as sufficient and appropriate.

In another instance, when contextual variables were removed in response to reviewer concerns about their inclusion, AI endorsed this as a satisfactory resolution, without engaging with the alternative and arguably more appropriate solution of repositioning them as background rather than eliminating them entirely.

3. Inconsistent Reasoning

A third issue was internal inconsistency in AI reasoning across related outputs. In one case, responding to a reviewer’s concern about why the authors had chosen banks, AI treated banks as a distinct category of knowledge-intensive firms in one output and as a standard representative case within the broader knowledge economy in another. In another case involving GPS-based positional tracking, AI responses varied significantly: one assumed that the researcher had used devices consistently, another dismissed the relevance of device variation by leaving it out of the output entirely, and a third framed the use of multiple devices as a methodological strength, even though AI had called this a weakness in several other instances.

4. AI Hallucinations

Finally, hallucinations were observed in some cases, where AI introduced unsupported institutional or geopolitical framing. For example, in one response evaluation involving a study on space and national security, the AI expanded the author’s mention of European countries (Spain, France, Italy, Germany, and Greece) into a broader geopolitical narrative. It incorrectly grouped the European Space Agency (ESA) and EU space programmes such as Galileo under a single “EU bloc policy framework,” despite ESA being an intergovernmental organization that includes non-EU members and operates independently of EU governance structures. It further justified the country selection by linking these states to “Black Sea regional tensions” and broader NATO–Russia dynamics, even though several of the listed countries (e.g., Spain and Germany) have no direct geographical or strategic connection to the Black Sea region. These additions were not present in the original input: they were neither requested by the reviewer nor part of the author’s response.

Why Human-Driven Response Letter Cross Check is Important

Alignment between Responses and Manuscript

Responding to reviewer comments is only one part of the revision process. Equally important is ensuring that the manuscript itself reflects the claimed changes.

- AI tools assess the text of the response, but they do not verify whether the suggested revisions have actually been implemented, whether the changes are scientifically appropriate, or whether cited page and line numbers correspond to real modifications.

- In our evaluation, we observed multiple instances where AI tools inferred or asserted that revisions had been made when no such changes were indicated in the author’s response. For example, in one case, the tool claimed that a discussion addressing concerns about ratio construction had been added to the manuscript, even though the author had not described any such revision. In another instance, AI introduced specific line numbers to suggest that changes had been incorporated, despite no reference to these numbers in the response.

- Some outputs included entirely fabricated technical details, such as generating effect size statistics (e.g., d values), F-statistics, or GPS device specifications that were never reported by the author. In other cases, AI stated that methodological concerns had been “addressed in the manuscript” when the response only provided a narrative justification and did not indicate any actual change to the study design, analysis, or reporting.

This creates a risk where responses may appear thorough and well-supported, while the manuscript itself remains unchanged or inconsistently revised. In contrast, expert review involves cross-checking responses against the manuscript to ensure that claimed revisions are both real and appropriate.
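Part of this cross-check is mechanical and can be sketched in a few lines. The example below is illustrative only and is not the procedure used in our evaluation. It assumes the original and revised manuscripts are available as lists of lines, that the response letter cites line numbers in the revised version, and that citations look like "line 2" or "lines 4-5". The function names are hypothetical.

```python
import difflib
import re


def changed_lines(original: list[str], revised: list[str]) -> set[int]:
    """Return 1-based line numbers in `revised` that were inserted or replaced."""
    matcher = difflib.SequenceMatcher(a=original, b=revised, autojunk=False)
    changed: set[int] = set()
    for tag, _i1, _i2, j1, j2 in matcher.get_opcodes():
        if tag in ("replace", "insert"):
            changed.update(range(j1 + 1, j2 + 1))
    return changed


def cited_lines(response: str) -> set[int]:
    """Extract line numbers from phrases such as 'line 2' or 'lines 4-5'."""
    pattern = r"\blines?\s+(\d+)(?:\s*[-\u2013]\s*(\d+))?"
    cited: set[int] = set()
    for start, end in re.findall(pattern, response, flags=re.IGNORECASE):
        first = int(start)
        last = int(end) if end else first
        cited.update(range(first, last + 1))
    return cited


def unsupported_citations(
    original: list[str], revised: list[str], response: str
) -> list[int]:
    """Cited line numbers that do not correspond to any change in `revised`."""
    return sorted(cited_lines(response) - changed_lines(original, revised))


# Hypothetical manuscript excerpts and response text.
original = ["Intro.", "We used asset turnover.", "Results follow."]
revised = ["Intro.", "We used working capital turnover.", "Results follow."]
response = "We revised the ratio (line 2) and added a limitation (lines 4-5)."

flagged = unsupported_citations(original, revised, response)
print(flagged)  # [4, 5]
```

A check like this only confirms that something changed at the cited location. It does not detect pure deletions, and it cannot judge whether the change is scientifically appropriate or answers the reviewer, which is where expert reading is still needed.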

Concerns Around Data Security

Using generative AI tools often involves uploading portions of unpublished manuscripts, reviewer comments, or data. This raises important considerations around the confidentiality of unpublished research, protection of intellectual property, and compliance with journal or institutional policies. Reports such as Protecto’s analysis of AI-related data breaches [1] and Wired’s coverage of prompt-based data exfiltration [2] highlight how interactions with AI tools can inadvertently expose sensitive information.

Deeper Interpretation of Reviewer Comments

Finally, many reviewer comments require more than clarification. In addition to evaluating author responses, it is important to check whether the reviewer has suggested an appropriate method for the dataset, whether a concern warrants a revision or a reasoned rebuttal, and whether a limitation is adequately acknowledged or requires deeper analysis. These decisions depend on domain knowledge, methodological understanding, and experience with scholarly conventions. We’ve seen before that AI tools can misinterpret reviewer intent itself, particularly when simplifying or reframing feedback, further compounding the risk of an inadequate response.

Conclusions

The limitations observed in AI outputs highlight the role of expert evaluation in the revision process. A human expert reviewing response letters

- interprets reviewer intent, including nuance, tone, and implicit concerns

- assesses whether the response directly and adequately addresses the comment

- evaluates whether the proposed changes are methodologically sound

- ensures consistency between the response letter and the revised manuscript

- identifies when a response should defend the author’s approach rather than concede.

AI tools can be useful in the revision process, but only within clear boundaries. They are effective for improving language and tone, organizing responses, and highlighting obvious omissions. However, they are less reliable for interpreting reviewer intent, evaluating methodological appropriateness, ensuring factual and technical accuracy, or validating whether a response is truly adequate. In high-stakes contexts like peer review, these distinctions matter.

Editage’s Response Letter Check (RLC) service, offered as part of the Premium English Editing, is designed around these principles. It provides authors with a structured review of their responses, focusing not just on clarity, but on adequacy, accuracy, and alignment with reviewer expectations.

We’d also love to hear your experience. Have you noticed similar issues when using AI for peer review responses? Where do you draw the line between using it as a support tool and relying on expert judgment? We look forward to your comments!

References

1. AI Data Privacy Breaches: Major Incidents & Analysis https://www.protecto.ai/blog/ai-data-privacy-breaches-incidents-analysis

2. A Single Poisoned Document Could Leak ‘Secret’ Data Via ChatGPT https://www.wired.com/story/poisoned-document-could-leak-secret-data-chatgpt/
