Pre-publication and post-publication issues: A report from the 2017 Peer Review Congress

2017 Peer Review Congress

The Editage team was one of the exhibitors at the Eighth International Congress on Peer Review and Scientific Publication, held in Chicago from September 9 to September 12, 2017. For those who were not able to attend the Congress, our team presents timely reports from the conference sessions to help you stay on top of the peer review discussions among the most prominent academics.

Before lunch on Day 3, studies about editorial and peer review process innovations at NPG, PLOS, and the BMJ captivated the crowd. It was also great to hear from ORCID about their innovations to help reviewers get credit for their peer review activity by linking it to their ORCID profile. After lunch, it was time to discuss pre-publication and post-publication issues in the scholarly communications landscape. Preprints have been a topic of discussion for some time now, and some interesting results and discussions during this session highlighted the value of preprints and preprint servers.

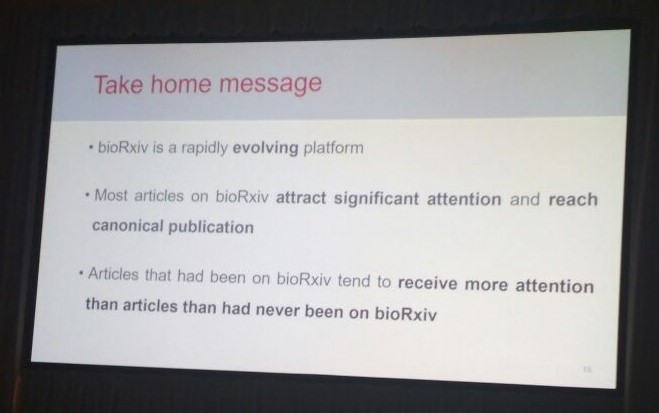

Stylianos Serghiou, from Stanford University School of Medicine, delivered the first presentation of this session. He presented results of a cross-sectional cohort study on associations between bioRxiv preprint traffic, Altmetric scores, and eventual canonical publication in the biomedical literature. He started by introducing bioRxiv, the largest preprint server for biological studies, and Altmetric, a resource that helps you track the attention that your work receives. The study gathered data for 7769 preprints and concluded that preprints on bioRxiv attracted a lot of non-citation attention and reached canonical publication. Although the Altmetric score did not influence publication, articles that had been posted on bioRxiv tended to receive more attention after being published than articles that had never been posted on bioRxiv. In short, biomedical studies posted on bioRxiv received attention, went on to be published, and received even more attention after publication.

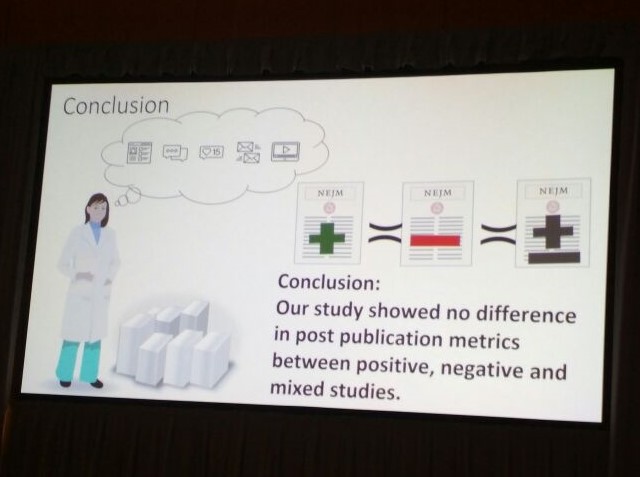

Ramya Ramaswami, from the New England Journal of Medicine (NEJM), then took the podium to discuss differences in readership metrics and media coverage between negative, positive, and mixed studies. NEJM stands out among journals because it does not put out press releases for the studies it publishes, which reduces its bias toward any particular study. NEJM.org tracks and displays metrics on readership and media coverage using 3 online analytic sources: Atypon.com, Crossref, and Cision. An analysis of 338 articles published in NEJM (22% negative, 66% positive, and 12% mixed) revealed no statistically significant differences in mean page views, citations, or media coverage among positive, negative, and mixed trials. This should encourage clinical researchers to publish trials with negative outcomes.

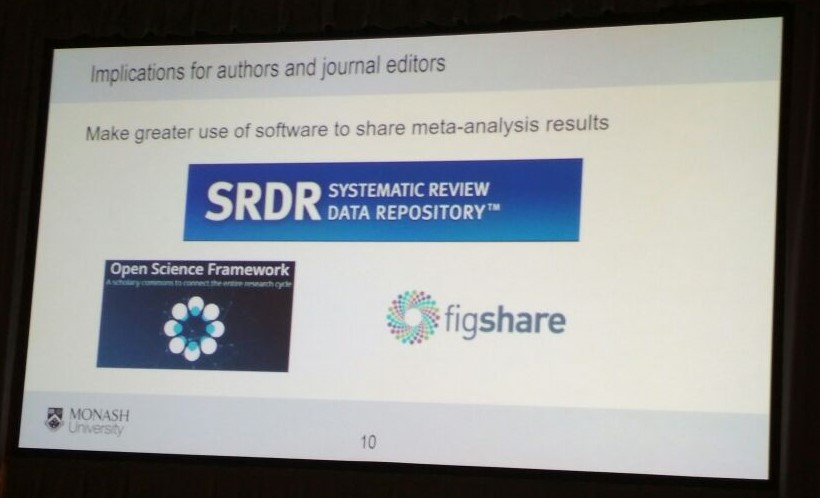

In the final presentation of the session, Matthew Page from Monash University presented results of a cross-sectional study about reproducible research practices in systematic reviews (SRs) of therapeutic interventions. An evaluation of 110 SRs led the group to conclude that reproducible research practices were suboptimal in SRs of therapeutic interventions. They also suggested that authors should use public data repositories such as the Systematic Review Data Repository or Open Science Framework to share data sets and statistical analysis code for their SRs. This practice would enable others to replicate findings, check for errors, or perform secondary analyses. Based on the information available, the group could not even attempt to recreate 27% of the evaluated SRs (indexed in MEDLINE), which is a serious issue. Inconsistencies in SRs and meta-analyses are a major problem in the medical literature.

The 2017 Peer Review Congress placed a lot of emphasis on bringing these problems to everyone’s attention. Considering the significant amount of effort, resources, and patients’ time that go into these studies, it is only natural for scientists to take this problem seriously.

Related reading:

- Biases in scientific publication: A report from the 2017 Peer Review Congress

- Research integrity and misconduct in science: A report from the 2017 Peer Review Congress

- Quality of reporting in scientific publications: A report from the 2017 Peer Review Congress

- Exploring funding and grant reviews: A report from the 2017 Peer Review Congress

- Quality of scientific literature: A report from the 2017 Peer Review Congress

- Editorial and peer-review process innovations: A report from the 2017 Peer Review Congress

- Post-publication issues and peer review: A report from the 2017 Peer Review Congress

- A report of posters exhibited at the 2017 Peer Review Congress

- 13 Takeaways from the 2017 Peer Review Congress in Chicago

Download the full report of the Peer Review Congress

This post summarizes some of the sessions presented at the Peer Review Congress. For a comprehensive overview, download the full report of the event below.

An overview of the Eigth International Congress on Peer Review and Scientific Publication and International Peer Review Week 2017_13_0.pdf

Published on: Sep 15, 2017
