Research Integrity in 2026: What’s Changed and Why It Matters

We revisit a theme that never seems to go away: research integrity. As long as there is research, conversations about integrity and ethics will continue. The larger issues remain the same. However, with ever-evolving technology and the rapid pace at which research is conducted and published globally, we felt it would best serve our readers to publish a series of articles that dive deep into current topics in research integrity.

In 2026, research integrity is no longer just about avoiding plagiarism or ensuring proper citation. It has evolved into something far more complex and far more urgent. Artificial intelligence (AI) is now embedded across the research lifecycle, from literature discovery and conducting research to analysis, manuscript preparation, and peer review.

So, what’s new about research integrity in 2026? And what does it mean for researchers, reviewers, and publishers navigating this shifting landscape?

From Misconduct to Complexity: A Broader Definition of Integrity

Traditionally, research integrity focused on clear violations—plagiarism, data fabrication, falsification, and unethical authorship practices.

In 2026, the conversation has expanded. Integrity now includes:

- Transparency in AI use

- Reproducibility and data traceability

- Responsible authorship in AI-assisted writing

- Accuracy in citations amid AI-generated content

The challenge now is less about spotting clear violations and more about navigating the gray areas introduced by new technologies.

The Rise of AI as Both Risk and Safeguard

AI has fundamentally changed how research quality is managed. Today, it plays a dual role:

AI as a Safeguard

AI tools are increasingly used to:

- Detect plagiarism

- Flag image manipulation

- Assess data anomalies

- Support editorial screening prior to peer review

In many journals, AI is becoming the first checkpoint for research quality.

AI as a Risk

At the same time, generative AI introduces new integrity challenges:

- “Hallucinated” references

- Over-reliance on AI-generated text

- Difficulty distinguishing human from AI contributions

- Fabricated data, text, or images

This duality defines research integrity in 2026:

The same technology that protects quality can also compromise it.

Human Judgment Is Evolving

Despite rapid automation, human oversight remains central.

Editors and reviewers are no longer just gatekeepers of content. They are increasingly:

- Interpreters of AI-flagged risks

- Decision-makers in ethically ambiguous situations

- Custodians of transparency and accountability

Rather than replacing human checks, AI is reshaping where human expertise is most needed: less on detection, more on judgment.

Policy Changes

One of the most noticeable shifts in 2026 is the growing number of AI-related policies from journals and publishers.

These include:

- Mandatory disclosure of AI use in manuscripts

- Clear statements that AI cannot be listed as an author

- Guidelines on acceptable vs unacceptable AI assistance

- Increased scrutiny of citations and references

However, policies remain inconsistent across disciplines and publishers.

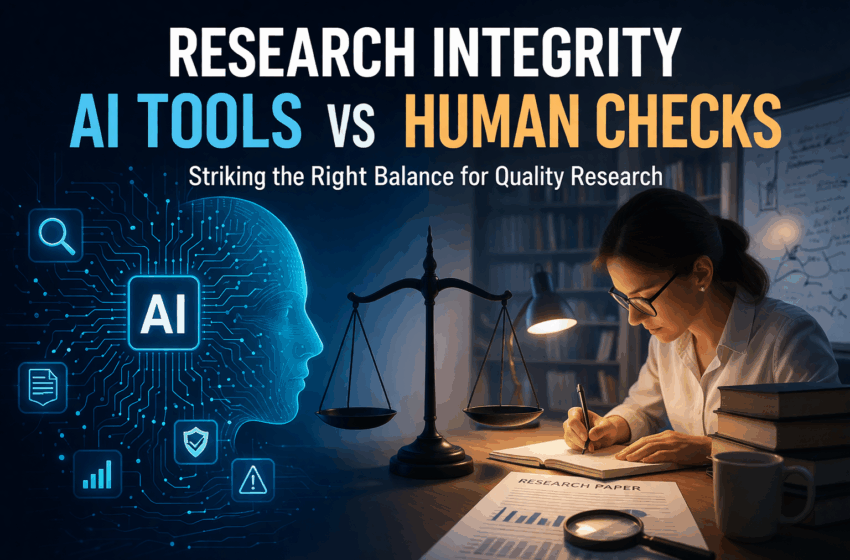

Towards a Hybrid Model of Integrity

If there is one defining feature of research integrity in 2026, it is this:

The shift toward a hybrid model combining AI tools and human judgment.

- AI brings scale and speed

- Humans bring context and accountability

Integrating these two approaches is key to the future, both for maintaining productivity and for protecting reputation.

The key question is no longer: AI or humans?

It is: How do we design workflows where both reinforce each other?
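One way to picture such a workflow is a simple triage step in which automated checks only prioritize submissions and a human editor makes every judgment call. The sketch below is purely illustrative: the names `Flag`, `Submission`, `triage`, and the `REVIEW_THRESHOLD` value are hypothetical and do not describe any journal's actual system.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Hypothetical cut-off: tool scores at or above this are escalated to a human editor.
REVIEW_THRESHOLD = 0.5


@dataclass
class Flag:
    check: str    # e.g. "plagiarism", "image_manipulation", "citation"
    score: float  # 0.0 (no concern) to 1.0 (strong concern), as reported by a tool
    note: str = ""


@dataclass
class Submission:
    manuscript_id: str
    ai_use_disclosed: bool  # whether the authors included an AI-use statement
    flags: List[Flag] = field(default_factory=list)


def triage(sub: Submission, threshold: float = REVIEW_THRESHOLD) -> Dict[str, object]:
    """Route a submission. Automated checks never reject; they only escalate."""
    reasons = [
        f"{f.check}: {f.note or 'flagged'}"
        for f in sub.flags
        if f.score >= threshold
    ]
    if not sub.ai_use_disclosed:
        reasons.append("disclosure: no AI-use statement provided")
    return {
        "manuscript_id": sub.manuscript_id,
        "route": "editor_review" if reasons else "standard_peer_review",
        "reasons": reasons,
    }


if __name__ == "__main__":
    example = Submission(
        "MS-002",
        ai_use_disclosed=True,
        flags=[Flag("citation", 0.9, "reference not found")],
    )
    print(triage(example))
```

The design choice worth noting is that the function has no "reject" outcome. The tools supply scale and speed by sorting the queue, while the listed reasons give the editor the context needed to exercise judgment and remain accountable for the decision.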

Setting the Stage: Conversations That Matter

As research integrity continues to evolve, there are no easy answers, only important questions.

Let us work together to share perspectives that will help unpack what responsible research looks like in this new era.

AI Disclosure: This article was created with the assistance of AI tools for research and outline drafting. It was written, reviewed, edited, and fact-checked by our human editorial team before publication.