A report of posters exhibited at the 2017 Peer Review Congress

The 2017 Peer Review Congress featured 2 poster sessions, one each on Days 2 and 3 of the Congress. Each poster presented a brief overview of an original published research paper. The posters were categorized under 14 broad topics and divided between the two days. These topics included Authorship and Contributorship; Bias in Peer Review, Reporting, and Publication; Conflict of Interest; Integrity and Misconduct; Quality of Reporting; Data Sharing; and Editorial and Peer Review Process.

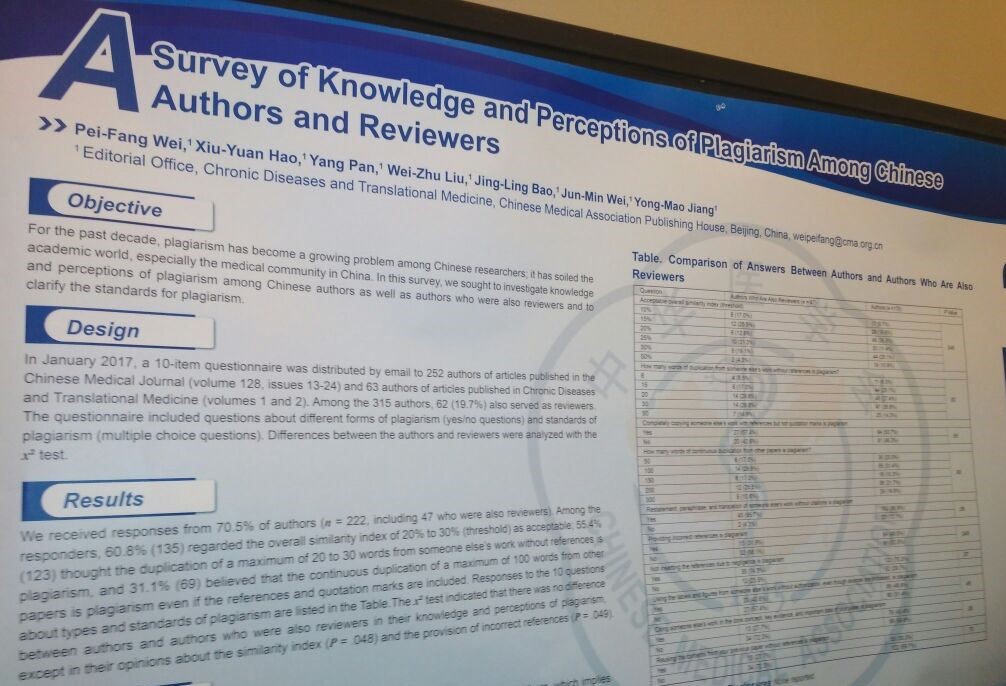

One of the posters on Integrity and Misconduct was particularly interesting – A Survey of Knowledge and Perception of Plagiarism Among Chinese Authors and Reviewers by Pei-Fang Wei, Xiu-Yuan Hao, Yang Pan, Wei-Zhu Liu, Jing-Ling Bao, Jun-Min Wei, and Yong-Mao Jiang (China).

This poster shares the results of a survey of Chinese authors (published in the Chinese Medical Journal and Chronic Diseases and Translational Medicine) and reviewers about their knowledge and perception of plagiarism. Of the 222 respondents, including 47 reviewers, 135 (about 61%) considered an overall similarity index of 20-30% acceptable. Over 55% of the authors thought that duplicating up to 20-30 words from someone else’s work without citing references is plagiarism, and 31% of the authors believed that the continuous duplication of 100 words from another paper is plagiarism even if references and quotation marks are included.

The variation in the responses highlighted the need for a unified standard for the overall similarity threshold and for extensive education about plagiarism in the Chinese academic community. The lack of sufficient knowledge about plagiarism among Chinese authors and reviewers is a problem that needs to be addressed as early as possible. In light of this need, Editage Insights has launched an in-depth learning course about ethical publication.

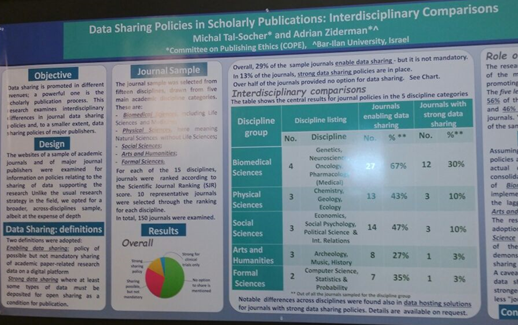

Of the 2 posters presented on Data Sharing, I found the one presented by Michal Tal-Socher and Adrian Ziderman (Israel) – Data Sharing Policies in Scholarly Publications: Interdisciplinary Comparisons – rather interesting.

This study by COPE analyzed the data sharing policies of the top 150 journals from all disciplines. The results demonstrated the importance of the top 5 journal publishers (accounting for 56% of the sampled journals) in promoting data sharing. Of the sampled journals, 46% had a strong data-sharing policy. The authors concluded that if journal and publisher policies are an important indicator of data sharing, then the biomedical sciences led in data-sharing policies, while the arts and humanities lagged in implementation. The physical and social sciences showed similar levels of adoption of norms, whereas the formal sciences demonstrated a lower level of data sharing implementation. The study encouraged the use of other tools to facilitate data sharing, which might be more effective than publication policies for less journal-centric disciplines.

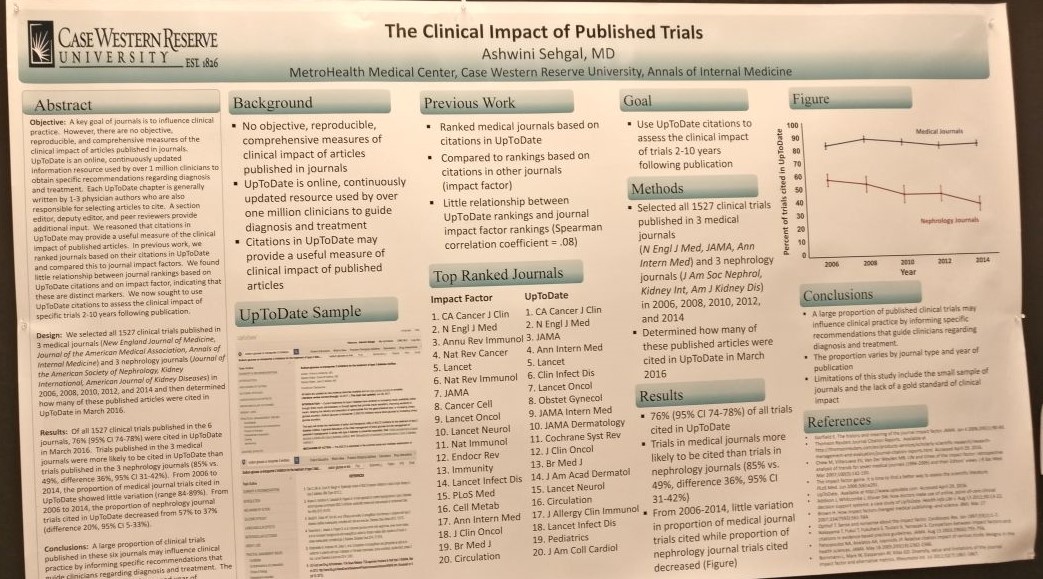

There was also an interesting poster on Bibliometrics and Scientometrics about the Clinical Impact of Published Trials by Ashwini Sehgal. The premise of the study was that citations in UpToDate, an online information resource used by more than 1 million clinicians for obtaining specific diagnosis- and treatment-related recommendations, may prove useful for measuring the clinical impact of published articles.

This study sought to use UpToDate citations to assess the clinical impact of 1527 trials, 2 to 10 years post-publication. The trials were selected from 3 general medical journals and 3 nephrology journals. Of these 1527 trials, 76% were cited in UpToDate in March 2016. The results indicated that while the proportion of medical journal trials cited in UpToDate showed little variation between 2006 and 2014, the proportion of nephrology journal trials cited increased from 37% to 57%. The study concluded that although a large proportion of these clinical trials might have influenced clinical practice, the proportion varies by journal type and year of publication.

The aim of the Peer Review Congress is to encourage researchers to study the quality and credibility of peer review and scientific publication. It is through such research that we can gain perspective on how the publication system functions and then hope to improve the processes, conduct, and dissemination of scientific research.

Poster abstracts available

Abstracts of all the posters presented at the 2017 Peer Review Congress are available through the event website. Click here to access them.

Related reading

- Biases in scientific publication: A report from the 2017 Peer Review Congress

- Research integrity and misconduct in science: A report from the 2017 Peer Review Congress

- Quality of reporting in scientific publications: A report from the 2017 Peer Review Congress

- Exploring funding and grant reviews: A report from the 2017 Peer Review Congress

- Quality of scientific literature: A report from the 2017 Peer Review Congress

- Editorial and peer-review process innovations: A report from the 2017 Peer Review Congress

- Post-publication issues and peer review: A report from the 2017 Peer Review Congress

- Pre-publication and post-publication issues: A report from the 2017 Peer Review Congress

- 13 Takeaways from the 2017 Peer Review Congress in Chicago

Download the full report of the Peer Review Congress

This post summarizes some of the posters exhibited at the Peer Review Congress. For a comprehensive overview, download the full report of the event below.

An overview of the Eighth International Congress on Peer Review and Scientific Publication_1.pdf