How to analyze longitudinal data appropriately: Tips for biomedical researchers

What is a longitudinal study?

Longitudinal studies involve the collection of data from the same subjects at multiple time points. These studies play a critical role in understanding the dynamics of health and disease over time. To ensure the validity and reliability of your findings, it’s essential to take specific precautions during statistical analysis.

Which are the best statistical tests for longitudinal data?

The table below summarizes statistical tests and models commonly used in longitudinal biomedical research.

| Test/model | Outcome type | Example | Advantages | Limitations | Best for |
| --- | --- | --- | --- | --- | --- |
| Linear mixed-effects model (LMM) | Continuous | Tracking HbA1c levels every 3 months in a type 2 diabetes cohort with dropout | | | Repeated measures with random individual trajectories |
| Repeated measures ANOVA (RM-ANOVA) | Continuous | Comparing FEV₁ at baseline, 6 months, and 12 months across three treatment arms in a balanced asthma trial | | | Small, balanced designs with few time points |
| Growth curve model (GCM) | Continuous | Modelling individual cognitive decline trajectories (e.g. MMSE score) over 10 years in an Alzheimer’s cohort | | | Modelling individual trajectories over time |
| Generalised estimating equations (GEE) | Continuous, binary, count | Estimating the population-average effect of a statin on systolic blood pressure across clinic visits in a cardiology registry | | | Population-average effects in cohort studies |
| GLMM, logistic (random-effects logistic) | Binary | Assessing whether HIV-positive patients achieve viral suppression (<200 copies/mL) at quarterly visits over 2 years of ART | | | Binary outcomes with repeated measures |
| McNemar’s test | Binary | Testing whether depression screening status (positive/negative on PHQ-9) changes from pre- to post-intervention in a paired sample | | | Two matched time points only |
| Marginal structural model (MSM) | Binary, continuous | Estimating the causal effect of time-varying corticosteroid use on bone mineral density in a lupus cohort, where disease activity confounds both treatment and outcome | | | Causal inference with time-varying confounding |
| Negative binomial mixed model | Count | Modelling number of COPD exacerbations per quarter per patient over a 2-year follow-up, with high between-patient variability | | | Over-dispersed repeated count outcomes |
| Poisson mixed model | Count | Counting seizure episodes per month in an epilepsy drug trial with an exposure offset for days at risk | | | Repeated count data with modest dispersion |
| Cox proportional hazards model | Time-to-event | Time from cancer diagnosis to first recurrence in a breast cancer surgery trial, adjusting for age, stage, and receptor status | | | Time to a single event (death, relapse, first hospitalisation) |
| Frailty model (random-effects Cox) | Time-to-event | Modelling recurrent UTI episodes in elderly care-home residents, accounting for unmeasured individual susceptibility | | | Clustered or recurrent event data |
| Competing risks model (Fine–Gray) | Time-to-event | Estimating cumulative incidence of graft-versus-host disease after bone marrow transplant, where non-relapse mortality is a competing event | | | Outcomes where other events preclude the primary event |
| Multi-state model | Time-to-event | Mapping transitions between remission, relapse, and death in a multiple sclerosis cohort over 15 years | | | Complex disease trajectories with multiple states |
| Interrupted time series (ITS) analysis | Continuous, count | Evaluating the impact of a national antibiotic prescribing guideline on monthly prescription rates across GP practices before and after implementation | | | Population-level impact of an intervention at a known time point |
| Latent class growth analysis (LCGA) | Continuous | Identifying distinct pain trajectory subgroups (e.g. persistent, resolving, delayed-onset) in a post-surgical recovery cohort | | | Identifying distinct subgroups with different longitudinal trajectories |
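As one concrete instance from the table, McNemar’s test for a paired binary outcome depends only on the discordant pairs, that is, the subjects whose status changed between the two time points. The sketch below computes the exact (binomial) two-sided p-value using only the Python standard library. The function name `mcnemar_exact` and the counts are hypothetical and chosen purely for illustration; a dedicated statistics package would normally be used in practice.

```python
from math import comb


def mcnemar_exact(b: int, c: int) -> float:
    """Two-sided exact McNemar p-value from the two discordant-pair counts.

    b: pairs that were positive before and negative after
    c: pairs that were negative before and positive after
    """
    n = b + c
    if n == 0:
        return 1.0  # no discordant pairs, so no evidence of change
    k = min(b, c)
    # Under the null hypothesis, discordant pairs split 50/50: Binomial(n, 0.5)
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    return min(1.0, 2 * tail)


# Hypothetical counts, for illustration only
p_value = mcnemar_exact(b=15, c=5)
print(f"exact McNemar p = {p_value:.4f}")
```

Concordant pairs (no change in status) do not enter the calculation, which is why the test suits exactly two matched time points and nothing more complex.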

How to analyze longitudinal data?

Here are 12 key precautions for biomedical researchers conducting longitudinal studies:

Data Quality Control

Implement rigorous data quality control measures to address issues like missing data, outliers, and inconsistencies. Data cleaning is crucial for maintaining data integrity.

Select Appropriate Statistical Techniques

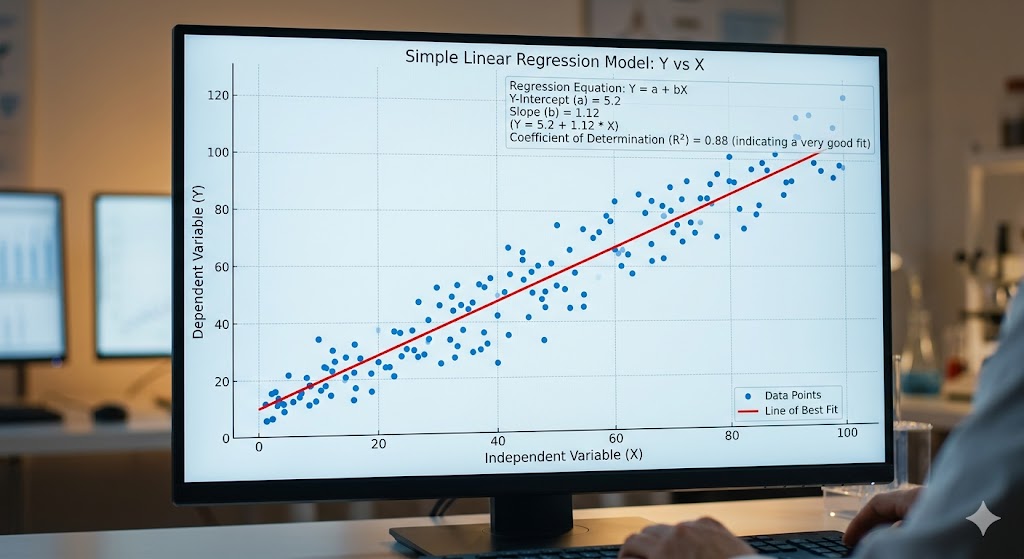

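Choose statistical methods that are suitable for longitudinal data, such as mixed-effects models, generalized estimating equations, or growth curve models. Methods that treat repeated measurements from the same subject as independent ignore within-subject correlation, which can produce incorrect standard errors and misleading conclusions.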

Longitudinal Data Structures

Recognize the different data structures in longitudinal studies, such as unbalanced, balanced, or irregularly spaced data. Your analysis plan should accommodate these structures.
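A quick structural check before modelling might look like the following sketch. It assumes the data are in long format, with one `(subject_id, time, value)` row per measurement; the function name `describe_design` and the example rows are hypothetical.

```python
from collections import defaultdict


def describe_design(records):
    """Summarise a long-format dataset given as (subject_id, time, value) rows."""
    times_by_subject = defaultdict(set)
    for subject_id, time, _value in records:
        times_by_subject[subject_id].add(time)

    # Balanced: every subject was measured at the same set of time points
    schedules = {frozenset(times) for times in times_by_subject.values()}
    # Equally spaced: the pooled time points are separated by a single gap size
    all_times = sorted(set().union(*times_by_subject.values()))
    gaps = {later - earlier for earlier, later in zip(all_times, all_times[1:])}

    return {
        "n_subjects": len(times_by_subject),
        "balanced": len(schedules) <= 1,
        "equally_spaced": len(gaps) <= 1,
        "obs_per_subject": {s: len(t) for s, t in times_by_subject.items()},
    }


# Hypothetical measurements at months 0, 3, and 6
records = [
    ("A", 0, 7.9), ("A", 3, 7.4), ("A", 6, 7.1),
    ("B", 0, 8.2), ("B", 3, 8.0),
    ("C", 0, 7.5), ("C", 6, 7.0),
]
summary = describe_design(records)
print(summary)
```

An unbalanced result like this one is a signal that methods requiring complete, balanced data (such as RM-ANOVA) would discard subjects, whereas mixed-effects models can use all available observations.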

Account for Time

Time is a critical factor in longitudinal studies. Consider time as a covariate, and assess time trends and patterns within your data. This allows you to explore how outcomes change over time.
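One simple way to see time acting as a covariate is to estimate a separate least-squares slope for each subject, sometimes called a summary-measures approach. The sketch below is illustrative only: the function name `subject_slopes` and the data are hypothetical, and a mixed-effects model would usually be preferred because it pools information across subjects rather than fitting each one in isolation.

```python
from collections import defaultdict
from statistics import mean


def subject_slopes(records):
    """Least-squares slope of value on time for each subject.

    records: iterable of (subject_id, time, value) rows in long format.
    Subjects with fewer than two distinct time points are skipped.
    """
    by_subject = defaultdict(list)
    for subject_id, time, value in records:
        by_subject[subject_id].append((time, value))

    slopes = {}
    for subject_id, points in by_subject.items():
        t_bar = mean(t for t, _ in points)
        y_bar = mean(y for _, y in points)
        sxx = sum((t - t_bar) ** 2 for t, _ in points)
        if sxx == 0:
            continue  # a slope is undefined with a single time point
        sxy = sum((t - t_bar) * (y - y_bar) for t, y in points)
        slopes[subject_id] = sxy / sxx
    return slopes


# Hypothetical HbA1c-like values at months 0, 3, and 6
records = [
    ("A", 0, 8.0), ("A", 3, 7.7), ("A", 6, 7.4),
    ("B", 0, 7.0), ("B", 6, 7.6),
    ("C", 0, 7.5),
]
slopes = subject_slopes(records)
for subject_id, slope in slopes.items():
    print(f"{subject_id}: {slope:+.3f} units per month")
```

Plotting or summarising these slopes by group gives a first impression of time trends before a formal model is fitted.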

Handling Missing Data

Develop a strategy for handling missing data, whether through imputation or other techniques, and consider why the data are missing (for example, dropout that is related to the outcome). Be transparent about your approach in your research report to ensure reproducibility.
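Before choosing a strategy, it helps to describe how much data is missing and in what pattern. The sketch below assumes each subject has one value per scheduled visit, with `None` marking a missed visit; the function name `missingness_pattern`, the subject IDs, and the values are all hypothetical.

```python
def missingness_pattern(observed):
    """Classify one subject's visit pattern.

    observed: list of booleans, one per scheduled visit in time order.
    """
    if all(observed):
        return "complete"
    first_missing = observed.index(False)
    # Monotone dropout: once a visit is missed, no later visit is observed
    if not any(observed[first_missing:]):
        return "dropout"
    return "intermittent"


# Hypothetical values at four scheduled visits; None marks a missed visit
visits = {
    "P01": [7.9, 7.4, 7.1, 6.9],
    "P02": [8.2, 8.0, None, None],
    "P03": [7.5, None, 7.0, 6.8],
    "P04": [8.8, 8.1, 7.9, None],
}

patterns = {
    subject: missingness_pattern([v is not None for v in values])
    for subject, values in visits.items()
}
n_visits = 4
observed_rate = [
    sum(values[j] is not None for values in visits.values()) / len(visits)
    for j in range(n_visits)
]
print(patterns)
print(observed_rate)
```

Reporting these patterns alongside the chosen method (for example, imputation or a likelihood-based model) makes the handling of missing data easier for readers to assess.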

Multiple Comparisons

Be cautious about multiple comparisons. Adjust significance levels or use methods like the Bonferroni correction to account for the increased risk of Type I errors when analyzing data at multiple time points.
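The Bonferroni correction multiplies each p-value by the number of tests. The Holm step-down procedure is a closely related alternative that controls the same family-wise error rate and is never less powerful. The sketch below implements both with the standard library; the p-values are hypothetical and stand in for one comparison at each of four time points.

```python
def bonferroni(p_values):
    """Bonferroni-adjusted p-values: each p multiplied by the number of tests."""
    m = len(p_values)
    return [min(1.0, p * m) for p in p_values]


def holm(p_values):
    """Holm step-down adjusted p-values, returned in the original order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, i in enumerate(order):
        # The smallest p is multiplied by m, the next by m - 1, and so on
        candidate = min(1.0, (m - rank) * p_values[i])
        # Enforce monotonicity so adjusted values never decrease with rank
        running_max = max(running_max, candidate)
        adjusted[i] = running_max
    return adjusted


# Hypothetical p-values from comparisons at four time points
raw = [0.010, 0.040, 0.030, 0.005]
print("Bonferroni:", bonferroni(raw))
print("Holm:      ", holm(raw))
```

A comparison is declared significant when its adjusted p-value falls below the chosen significance level, so no further adjustment of the threshold itself is needed.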

Control for Confounders

Identify potential confounding variables that may influence your results. Include these in your models to ensure the validity of your findings.

Explore Interactions

Investigate interactions between variables, especially the interaction between predictors and time. This can reveal how the relationships change over the course of the study.

Model Assumptions

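Check the assumptions of the chosen statistical models, such as linearity, homoscedasticity, and independence between subjects. Repeated measurements within a subject are correlated by design, so the model must represent that correlation appropriately. Violations of these assumptions can affect the validity of your results.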

Robustness Checks

Conduct sensitivity analyses to assess the robustness of your findings. This involves testing different models or approaches to ensure the consistency of results.
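One simple robustness check, among many possible ones, is a leave-one-out analysis: recompute a summary estimate with each subject removed in turn and see whether any single subject drives the result. The sketch below applies this to hypothetical per-subject slopes; the function name `leave_one_out_means` and the values are assumptions made for illustration, and at least two subjects are required.

```python
from statistics import mean


def leave_one_out_means(estimates):
    """Mean of per-subject estimates, recomputed with each subject left out."""
    subjects = list(estimates)
    return {
        left_out: mean(estimates[s] for s in subjects if s != left_out)
        for left_out in subjects
    }


# Hypothetical per-subject slopes (outcome units per month)
slopes = {"P01": -0.10, "P02": -0.12, "P03": -0.08, "P04": -0.50}

overall = mean(slopes.values())
loo = leave_one_out_means(slopes)
print(f"all subjects: {overall:+.3f}")
for subject, value in loo.items():
    print(f"without {subject}: {value:+.3f}")
```

If the estimate shifts markedly when one subject is excluded, as it does for `P04` in this made-up example, that subject deserves a closer look before conclusions are drawn.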

Data Visualization

Use data visualization techniques to explore your data before, during, and after analysis. This helps identify trends, outliers, and potential issues that may require further investigation.

Transparent Reporting

Document your analytical procedures thoroughly and report all relevant details in your research papers. Transparency is crucial for reproducibility and peer review.
